There is something you only truly understand after spending many hours working with all these tools: no artificial intelligence can yet replace the judgement of someone who has already built up professional experience, made real mistakes, and knows how to distinguish between a superficial solution and a valid one.

I say this based on daily practice, not theory. I use various AIs on a regular basis, personally paying for several of them to truly understand their strengths, limitations and biases. And the more I use them, the clearer one conclusion becomes: today, AI speeds things up enormously, but it also requires us to be more vigilant.

Just this weekend, for example, I spent many hours building a website with the help of various artificial intelligences. The initial impression was promising: speed, apparent reliability, immediate responses, convincing code. But as the work progressed, something emerged that is very familiar to those of us who have spent years solving complex problems: AI often thinks it knows more than it actually does.

It makes authoritative suggestions even when it is wrong. It fixes one part and breaks another. It strings together solutions that seem coherent but do not always grasp the problem as a whole. In the end, I dismantled much of what had been generated and returned to a more traditional approach: more manual and slower, but also more reliable.

And that experience sums up quite well where we stand today.

Artificial intelligence does not replace the senior professional; it empowers them. Those who already possess sound judgement know when to accept a proposal, when to reject it, and when a seemingly brilliant answer hides an incomplete understanding. That is probably why the most accurate way to think of AI today is not as a substitute but as an amplifier: it strengthens the senior professional, but still needs human hands at the helm.

Added to this is an aspect that is often underestimated: no AI is neutral. They all carry inherent biases, cultural priorities, training data and design choices that shape their responses. Governance is not just about choosing a powerful tool, but about understanding what worldview it embodies, what risks it introduces, and which specific tasks it is best suited for.

Because ultimately, in a serious professional environment, the difference is not made by who uses artificial intelligence the most, but by who knows best when to trust it… and when not to.

Artificial intelligence does not eliminate judgement: it puts it to the test.

I hope this overview has been useful. Not so that you’re left with a list of names, but so that the next time someone asks you ‘which one should I go for?’, you have something more interesting to say than just the name you’ve heard most often lately.

If tomorrow you sit down at your computer and have to choose one

We’ve reached the end. And the most useful conclusion I can give you is also the simplest: stop looking for the best AI. Start asking yourself which one best suits what you’ll need tomorrow.

If you just write emails and want quick help within your usual environment, Copilot will probably suffice if you use Office, or Gemini if you use Google Workspace. You don’t need anything more sophisticated for that.

If you do a lot of research and need answers backed by verifiable sources, Perplexity will transform your experience immediately.

If you work with your own documents, whether they’re reports, PDFs, contracts or presentations, NotebookLM is probably the most powerful workflow change you can make today.

If you want a general assistant for thinking, writing, coding or analysing without the hassle of choosing, ChatGPT remains the most balanced and versatile option.

If you write a lot and care about the quality of your text, Claude remains the quiet favourite among those who work with words on a daily basis.

If you want to explore what it means to have an AI that acts rather than just responds, Genspark deserves far more attention than it currently receives.

How this series of articles was written

I think it’s important to be honest about this. Especially in an article discussing artificial intelligence.

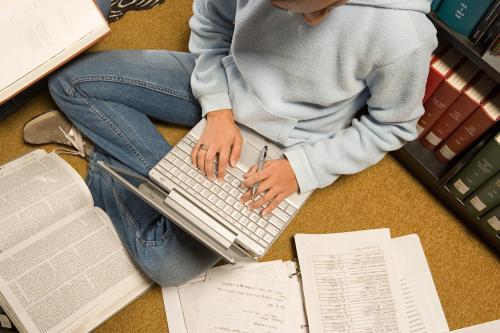

I didn’t write this text on my own. I wrote it with the help of several AIs, and quite intensively. I used ChatGPT to structure ideas and explore different approaches. Gemini to cross-check information and work with reference material. Claude to review the wording, refine the tone and improve coherence between sections. NotebookLM to analyse and cross-reference the sources and previous materials I had gathered. And Mistral at specific moments where I wanted a more neutral perspective or a second opinion on certain paragraphs.

There were a great many iterations. I didn’t write this article in an afternoon. It was a back-and-forth process: proposing, reviewing, discarding, rewriting, cross-checking with another tool, reviewing again. At some points I completely changed the approach after seeing how a different tool had framed it. At others, I rejected what the AI suggested because, although it sounded correct, it didn’t sound like me.

Over time, I’ve refined a way of working which, with a nod to my creative alter ego, I call the Stainless Iteration Method. It works like this: upon finishing any deliverable with an AI, I ask it to self-assess on a scale of 1 to 10 and explain why it isn’t a 10. This forced self-assessment makes the model identify its own weaknesses: where arguments are flawed, where nuance is lacking, which paragraph sounds generic. I then take that same deliverable, including the assessment, to a different AI and ask it to evaluate it as well. The second one doesn’t start from scratch: it starts from a previous diagnosis that it can confirm, refute or refine.

The result is that you reduce the blind spots of each model. No AI really knows what it doesn’t know. But when you cross-reference evaluations, one model’s blind spots are often visible to another. It is, in essence, applying to AI the same logic that leads any experienced editor to request a second read-through before publishing.

What I did do at every stage was review every paragraph, decide what to keep and what to discard, and take responsibility for what the text says. The ideas, the opinions, the anecdote about the weekend spent building the website, the closing sentence, the underlying structure: these are mine. The tools gave me speed, the capacity for rapid iteration, and a useful mirror in which to test my thinking.

That is exactly what I am trying to explain throughout this article: AI does not write for you. But if you know how to use it, it multiplies what you can do. And this text is, amongst other things, a practical example of that.