Generative artificial intelligence such as ChatGPT represents a major leap in the advancement of artificial intelligence. Previously, artificial intelligence mainly manipulated data. Now, it is also capable of manipulating our language, the main feature that separates humans from other living beings.

ChatGPT, the programme that lets you ask questions on any subject and generates coherent answers, has taken the news by storm. It can write stories and poems, answer exam questions, summarise texts, and so on. You could almost say that ChatGPT is capable of passing the Turing test, which seeks to determine whether a machine can hold a conversation in natural language so convincingly that it is indistinguishable from a human being.

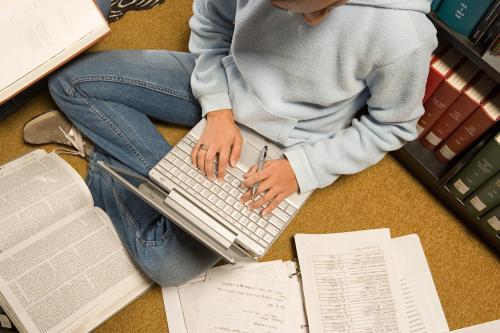

On the other hand, we have also seen that ChatGPT is able to make up things that are not true, so-called “hallucinations”. This is not a problem for a person who collaborates with ChatGPT to produce a text in a field they have mastered, as they will be able to distinguish truth from “lies”. However, it is a problem if the text is generated, for example, by a secondary school pupil who has to write a paper on climate change. Moreover, ChatGPT could generate a huge amount of disinformation (fake news) in a very short time. In the wrong hands, it could cause serious problems in societies and even influence democratic elections. ChatGPT is so good that even legal or medical professionals might be tempted to use it for their work, with all the consequences and risks that entails.

With all these challenges, and the many more that I have not mentioned here, several initiatives have emerged that propose to put limits on (generative) artificial intelligence.

To move forward with the development and use of artificial intelligence in this uncertain landscape of generative AI, here are seven practical guidelines for businesses:

1. For any use of artificial intelligence, apply a methodology for the responsible use of artificial intelligence from the design stage onwards.

2. Learn to estimate the risk of an AI application by analysing the potential harm it can generate, in terms of severity, scale and likelihood.

3. Do not apply generative AI, such as ChatGPT, to high-risk use cases. If you want to experiment, do so with low-risk use cases such as summarising non-critical text.

4. If you still need to apply generative AI to a high-risk use case, be aware of all possible requirements coming from future European regulation.

5. Never share sensitive information with systems like ChatGPT, as you are sharing this information with third parties. More advanced companies may have their own instance that avoids this risk.

6. When using ChatGPT to generate content, always add a note of transparency. It is important that your readers know the content was generated by AI. If you have generated the content in collaboration with ChatGPT, make this clear in a note as well. The person or company using ChatGPT is always responsible for the result (accountability).

7. And finally, do not use generative AI for illicit purposes such as generating fake news or impersonation.
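
Guidelines 2 and 3 can be made a little more concrete with a simple scoring scheme. What follows is only a minimal sketch in Python, not an established methodology: the 1 to 5 scales, the multiplication of the three factors, the thresholds and all names (`HarmEstimate`, `risk_score`, `risk_level`, `generative_ai_allowed`) are illustrative assumptions that each business would need to replace with its own criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HarmEstimate:
    """Potential harm of an AI use case, rated on assumed 1-5 ordinal scales."""
    severity: int    # 1 = negligible harm, 5 = severe or irreversible harm
    scale: int       # 1 = a handful of people affected, 5 = society-wide
    likelihood: int  # 1 = very unlikely, 5 = almost certain

    def __post_init__(self) -> None:
        # Reject ratings outside the assumed 1-5 range.
        for name in ("severity", "scale", "likelihood"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")


def risk_score(harm: HarmEstimate) -> int:
    """Combine the three factors into one number (an assumed, simple product)."""
    return harm.severity * harm.scale * harm.likelihood


def risk_level(harm: HarmEstimate) -> str:
    """Map the score to a coarse level; the thresholds are illustrative only."""
    score = risk_score(harm)
    # Treat maximum severity as high risk regardless of scale or likelihood.
    if harm.severity == 5 or score >= 48:
        return "high"
    if score >= 12:
        return "medium"
    return "low"


def generative_ai_allowed(harm: HarmEstimate) -> bool:
    """A strict reading of guideline 3: experiment only with low-risk use cases."""
    return risk_level(harm) == "low"


# Hypothetical ratings for two use cases mentioned in the text.
summarise_internal_notes = HarmEstimate(severity=1, scale=2, likelihood=2)
draft_medical_advice = HarmEstimate(severity=5, scale=3, likelihood=3)

print(risk_level(summarise_internal_notes), generative_ai_allowed(summarise_internal_notes))
print(risk_level(draft_medical_advice), generative_ai_allowed(draft_medical_advice))
```

With these assumed ratings, summarising non-critical text comes out as low risk, while drafting medical advice is classed as high risk and therefore excluded. The ratings themselves remain a human judgement; the code only makes that judgement explicit and repeatable.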

In summary, the fact that more and more organisations are reflecting on the potential negative impacts of using artificial intelligence is important, necessary and positive.