Artificial intelligence has been evolving for decades, but in recent years we have witnessed a particularly noticeable shift with the emergence of so-called creative or generative AI. Tools capable of producing text, images, music, or video have quickly moved from research laboratories to become an integral part of the daily work of designers, developers, and creative professionals.

To better understand this phenomenon, we spoke with Irene Ferrer, a strategic designer specializing in accessibility and artificial intelligence applied to design, about what creative AI really is, how it differs from other types of AI, and how it is beginning to transform creative processes and the development of digital products.

What is creative AI?

Generative AI describes a technological capability: models that produce new content from data, whether text, images, video, audio, or code. It works by analyzing enormous amounts of information to detect patterns and learn how words, shapes, sounds, or structures typically combine. Based on this learning, the system does not recall specific examples: it calculates which combinations are most likely and generates new variations that are consistent with what it has learned.

When we use a model to create an image, write text, or generate code, we are using that generative capability.

Creative AI, on the other hand, emerges when that capability ceases to be used in isolation and becomes integrated into a workflow. It is not just about generating content, but about incorporating that capability into design, communication, or product development workflows. At that point, AI ceases to be a one-off tool and begins to form part of the creative process itself.

The distinction is significant because it also shapes professional profiles. Knowing how to use a generative tool is not the same as understanding how to integrate that capability into a process. Once integrated, AI is no longer merely a content generator; it becomes a system that expands the exploration of ideas, accelerates iterations, and opens new possibilities within creative work.

We only have to look at ourselves. People also create based on references: what we have learned, the influences that have shaped us, or the cultural context in which we live. Over time, we develop judgment, intention, and our own perspective. AI does not have that. What it does have is something else: an extraordinary ability to combine references on a massive scale and generate variations at a speed impossible for a human.

That’s why, rather than asking whether AI is creative, I find it more interesting to ask a different question: what happens when that generative capacity is integrated into human creative processes? That’s where so-called creative AI truly comes into play.

What are the key aspects of creative AI?

Three aspects are worth understanding clearly so as not to get lost in the noise.

The first is that it operates probabilistically. AI does not understand ideas the way a person would, but rather calculates which combination of words, images, or sounds is most likely to fit based on what it has learned. That explains why it can generate plausible results so fluidly, and also why it can make mistakes.
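As a rough illustration of what "operating probabilistically" means, here is a toy sketch in Python. It is not how production generative models are built (those rely on large neural networks), and the corpus and function names are invented for this example. It only shows the underlying idea: count which word tends to follow which, turn the counts into probabilities, and sample from them.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    counts = follows.get(word, {})
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def generate(follows, start, length, seed=None):
    """Sample a sequence word by word, weighting each choice by its probability."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        probs = next_word_probabilities(follows, out[-1])
        if not probs:  # no known continuation: stop early
            break
        words, weights = zip(*probs.items())
        out.append(rng.choices(words, weights=weights, k=1)[0])
    return out


# Hypothetical miniature "training data", made up for this sketch.
CORPUS = "the cat sat on the mat the dog sat on the rug the cat saw the dog"

model = train_bigrams(CORPUS)
print(next_word_probabilities(model, "the"))
print(" ".join(generate(model, "the", 8, seed=1)))
```

Every step the sketch takes is locally plausible, because each word really did follow the previous one somewhere in the corpus. Nothing checks whether the whole sentence is true or even meaningful, which is a small-scale version of why fluent output and mistakes come from the same mechanism.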

The second aspect is that we are still in a very early phase. I sometimes explain it simply: it’s like a baby who has learned to walk very quickly—even to run—but who still trips over things that seem simple. The evolution is real and accelerating, but we must not confuse speed with maturity.

The third aspect, and for me the most important one, is human-machine collaboration. AI doesn’t make decisions. The judgment, intention, and responsibility for what is generated remain human; when this is forgotten, it usually shows quickly in the results.

I believe the question is no longer what AI can do, but rather what part of the creative process we still want to remain human.

At the same time, we cannot ignore the elephant in the room. Creative AI also raises important debates about ethics and intellectual property, especially regarding the data used to train these models and the authorship of the generated content. For example, today it would be technically possible to train a model to write a text “in the style of” a specific author. And it’s reasonable to think that many writers wouldn’t be thrilled by the idea that a machine could replicate their narrative voice.

These kinds of issues are part of the current debate among technology, the cultural industry, and the legal framework, and will likely be among the major topics we’ll need to continue addressing in the coming years.

How does it differ from other types of AI?

This is an important question because we sometimes forget that artificial intelligence is not something new. It has been used for decades to analyze data, classify information, or automate decisions. As Marta Peirano explains in The Enemy Knows the System, algorithms were already organizing much of what we see, consume, or decide long before we started talking about generative AI.

The main difference lies in the shift in approach. Until recently, we used AI to interpret the world of data. Its function was to detect patterns, predict behaviors, or automate decisions based on existing information.

Creative AI introduces something different: it allows for the generation of new content based on those same patterns. It is no longer limited to analyzing what already exists, but can produce texts, images, or solutions that weren’t there before. We could say that traditional AI tries to find the best possible answer to a problem. Creative AI, on the other hand, explores many possibilities. And that shift profoundly changes how it integrates into creative processes.

What are its main practical applications?

The most visible applications are emerging in fields where content generation and idea exploration are central, such as design, communication, product development, or education.

In digital design, for example, it allows us to explore in minutes visual or conceptual variations that previously took days. As a lab tool for rapid iteration, it’s extraordinary. I remember my first workshops with stakeholders, where we worked with paper wireframes to quickly rule out options and move the process forward; today, that same kind of exploration can be done in a matter of minutes, generating wireframes, flows, or initial visual proposals to iterate on rapidly.

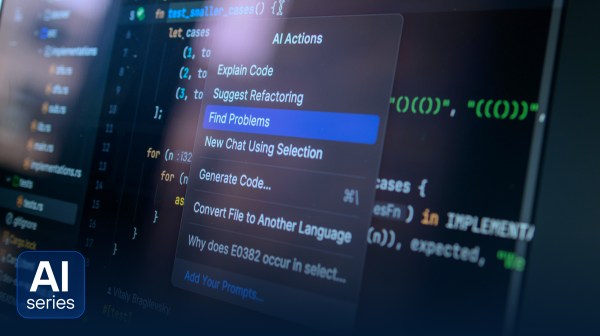

Where the change is becoming truly profound is in the relationship between design and development. Automation and tokenization within design systems are bringing these two worlds closer together in a way that was previously hard to imagine. Tools like Figma Tokens, documentation platforms like Zeroheight, or development environments like Storybook are helping to connect the designer’s work with that of the developer in a much more direct way. It’s not magic yet, but we’re getting closer and closer to a point where a prototype, a component, or an interface pattern can move almost directly into development and become part of the system used by technical teams. In that sense, the designer is moving ever closer to the realm of development, and the conversation between the two roles is becoming much more fluid and productive.
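To make the idea of tokenization more concrete, here is a minimal sketch in Python of how design decisions stored as tokens could be turned into something a developer consumes directly, in this case CSS custom properties. The token names, values, and nesting are hypothetical, and the code does not reproduce the actual file format or API of Figma Tokens, Zeroheight, or Storybook; real pipelines normally rely on dedicated tooling for this step.

```python
def flatten_tokens(tokens, prefix=()):
    """Walk a nested token dictionary and yield (path, value) pairs for leaves."""
    for name, value in tokens.items():
        path = prefix + (name,)
        if isinstance(value, dict):
            yield from flatten_tokens(value, path)
        else:
            yield path, value


def to_css_variables(tokens, selector=":root"):
    """Render the tokens as CSS custom properties inside one rule."""
    lines = [f"{selector} {{"]
    for path, value in flatten_tokens(tokens):
        lines.append(f"  --{'-'.join(path)}: {value};")
    lines.append("}")
    return "\n".join(lines)


# Hypothetical tokens, invented for this sketch.
TOKENS = {
    "color": {
        "brand": {"primary": "#0055ff"},
        "text": {"default": "#1a1a1a"},
    },
    "spacing": {"sm": "4px", "md": "8px"},
}

print(to_css_variables(TOKENS))
```

The point of the sketch is the single source of truth: the designer changes a value in the token file, and the stylesheet used by the development team is regenerated from it rather than edited by hand.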

That said, it’s also important to be realistic. Not all companies can or should rely on AI in the same way. In early-stage exploration contexts, as is often the case in startups or during initial product phases, AI allows for rapid iteration, testing ideas, and validating concepts in the market with great agility. In more established products, the balance is usually different, because the product’s quality, consistency, and accumulated experience require more human judgment and greater care in every decision.

There is another distinct dimension where generative AI is also having a massive impact: visual creation for branding, communication, or the conceptual design of physical products. Here we’re talking about generating images, advertising campaigns, packaging, or visual prototypes with a level of realism that previously required large teams, photo shoots, or lengthy production processes.

Furthermore, all of this opens the door to something particularly interesting: personalization. Today we are beginning to see systems capable of generating experiences tailored to each user, from dynamic content to interfaces or pages that adapt to the context or preferences of each individual.

We are living in a very exciting time, with a vast array of possibilities. But there is one idea I think is important to emphasize: the tool alone is not enough. AI integrated into a clear creative process multiplies its value, while AI used without a process generates noise with a very polished presentation.

Since late 2022, we’ve seen a very visible acceleration of these tools, which have put capabilities once reserved for labs or large companies within reach of many professionals. What’s interesting now isn’t just which tools exist, but how they’re integrated into work processes.

When AI is judiciously incorporated into a creative or design workflow—and that involves deciding when a human steps in and when tasks are delegated to the machine—it ceases to be a technological novelty and begins to become a true ally.

How does it complement other new technologies?

Creative AI does not operate in isolation, but as part of a broader technological ecosystem.

It relies on cloud infrastructures that enable training and running models at scale, integrates with design and development tools, and is beginning to combine with technologies like augmented reality or virtual reality to generate more immersive experiences.

The role of edge computing is also growing: that is, models that run directly on devices such as mobile phones or computers, without constantly relying on the cloud. This reduces latency, improves privacy, and enables faster, more contextual experiences.

Another particularly interesting area is accessibility. AI is already being used to generate automatic image descriptions, captions, or content adaptations, which can make information more accessible to a wider audience.

What examples are there?

Today, there are systems capable of generating text, images, video, music, animation, or code, and the ecosystem of tools changes very quickly: some evolve, others integrate into larger platforms, and others disappear within a few years.

That’s why, when people ask me for concrete examples or specific tools, I usually respond with a metaphor: it doesn’t matter whether the spoon is made of wood or gold, because the goal remains the same, which is to eat.

Tools are just a moment in a much longer technological evolution. What truly endures is the creative process and our ability to use those tools to explore ideas, solve problems, and generate meaningful solutions.

My focus isn’t on implementing AI just because it’s a trend, but on designing workflows where that AI adds value. In the end, technology changes quickly, but what remains is our judgment.