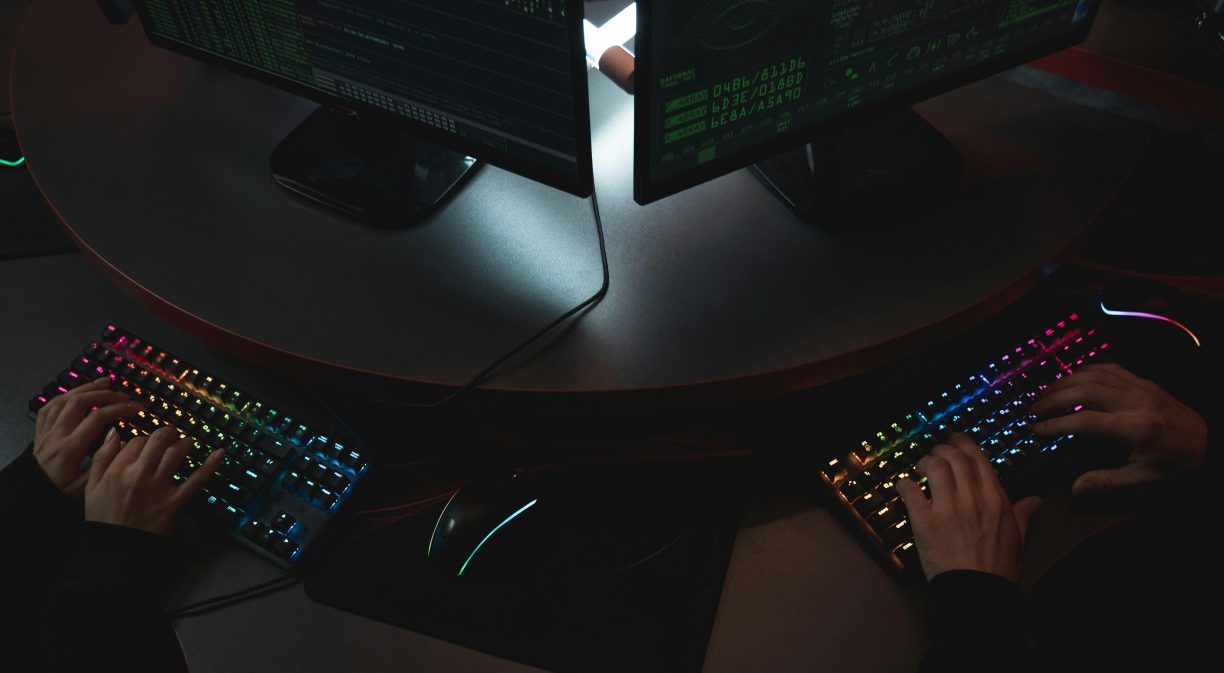

What is agentic AI for security?

Agentic AI marks a shift from passive security tools to active, autonomous defence systems. Agents operate independently and cooperate with other agents to manage different parts of security operations simultaneously. Rather than simply informing human analysts, agentic AI can investigate events on its own, gather information from various sources and, in some cases, intervene quickly.

Current status and advantages

The cybersecurity sector is adopting agentic AI rapidly. Fifty-nine per cent of companies describe agentic AI as a “work in progress”, which suggests that 2025 could be a turning point for its widespread use. Large companies such as Google have already demonstrated practical applications through projects such as Big Sleep, which uncovered real-world security vulnerabilities that could have put users at risk.

Benefits include threat detection and response, improved application security through AutoFix capabilities, vulnerability management, and augmentation of SOC teams, along with reduced alert fatigue and more efficient investigations.

Getting started: implementation guide

- Phase 1: Assessment and planning: Start by assessing your current security posture and identifying areas where autonomous AI agents can deliver the most value. Begin with low-risk tasks such as log analysis, threat intelligence correlation, or automated patch management for non-critical systems.

- Phase 2: Establish governance: Implement explicit policies on what AI agents can do autonomously and what requires human approval. Use robust audit logs and oversight mechanisms to track every AI agent action, supporting accountability and continuous improvement.

- Phase 3: Pilot implementation: Run a pilot focused on a specific area, such as endpoint protection or network monitoring. Establish quantifiable success metrics, such as response time, false positive counts, and overall threat detection performance.

- Phase 4: Scale and integrate: Once the pilot has proved successful, gradually extend it to other security domains. Focus on enabling different AI agents to work collaboratively, sharing threat intelligence and coordinating response actions for maximum effectiveness against complex, multi-vector attacks.
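
The success metrics named in Phase 3 can be made concrete with a small calculation. The sketch below is only an illustration: the `Alert` record, its field names, and the `pilot_metrics` function are hypothetical, and it assumes each alert from the pilot has been reviewed by an analyst so that ground truth is available.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class Alert:
    """One event seen during the pilot (hypothetical record shape)."""
    flagged_malicious: bool   # what the agent decided
    actually_malicious: bool  # ground truth from analyst review
    response_seconds: float   # detection-to-response time, if flagged


def pilot_metrics(alerts: list[Alert]) -> dict[str, float]:
    """Summarise detection quality and response speed for a pilot."""
    tp = sum(a.flagged_malicious and a.actually_malicious for a in alerts)
    fp = sum(a.flagged_malicious and not a.actually_malicious for a in alerts)
    fn = sum(not a.flagged_malicious and a.actually_malicious for a in alerts)
    flagged_times = [a.response_seconds for a in alerts if a.flagged_malicious]
    return {
        # Share of raised alerts that turned out to be benign.
        "false_positive_share": fp / (tp + fp) if (tp + fp) else 0.0,
        # Share of real threats that the agent caught.
        "detection_rate": tp / (tp + fn) if (tp + fn) else 0.0,
        # Average response time over the alerts the agent acted on.
        "mean_response_seconds": mean(flagged_times) if flagged_times else 0.0,
    }
```

Computing the same figures before and after the pilot, on comparable traffic, gives a baseline against which improvement can be judged.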

Best practices and tips

Security considerations:

- Apply the principle of least privilege to AI agents by issuing them transient, scoped credentials.

- Encrypt all data managed by AI systems and define dedicated data retention policies for it.

- Implement rigorous and regular oversight of AI decision-making systems.
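
As a rough illustration of transient, scoped credentials, the sketch below issues a short-lived token limited to named scopes and checks it before each action. `ScopedToken`, `issue_token`, `is_allowed`, and scope strings such as `logs:read` are all hypothetical names chosen for this example. A real deployment would more likely rely on an existing identity provider or secrets manager than on hand-rolled tokens.

```python
from __future__ import annotations

import secrets
import time
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ScopedToken:
    """A short-lived credential restricted to an explicit set of scopes."""
    agent_id: str
    scopes: frozenset[str]
    expires_at: float  # Unix timestamp after which the token is invalid
    value: str = field(default_factory=lambda: secrets.token_urlsafe(32))


def issue_token(agent_id: str, scopes: set[str], ttl_seconds: float = 300,
                now: float | None = None) -> ScopedToken:
    """Issue a token that grants only `scopes` and expires after `ttl_seconds`."""
    issued_at = time.time() if now is None else now
    return ScopedToken(agent_id, frozenset(scopes), issued_at + ttl_seconds)


def is_allowed(token: ScopedToken, scope: str, now: float | None = None) -> bool:
    """Allow an action only if the token is unexpired and covers the scope."""
    current = time.time() if now is None else now
    return current < token.expires_at and scope in token.scopes
```

A log-triage agent given only `logs:read` for five minutes cannot isolate a host, and cannot read logs either once the token has expired. It has to request a fresh credential for each task.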

Implementation tips:

- Start small with well-defined use cases to gain experience and confidence.

- Invest in integration capabilities using standardised communication protocols.

- Maintain transparency by adopting AI practices whose behaviour you can understand and explain.

- Prepare for continuous learning with appropriate feedback mechanisms.

- Plan for robust error handling and recovery mechanisms.
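
One possible shape for the error handling and recovery mentioned in the last tip is a wrapper that retries a failing agent action with exponential backoff and then hands over to a fallback, such as escalating to a human. The function name `run_with_recovery` and its parameters are assumptions made for this sketch, not part of any particular framework.

```python
from __future__ import annotations

import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_recovery(
    action: Callable[[], T],
    fallback: Callable[[Exception], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try `action` up to `attempts` times, then call `fallback` with the last error."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error: Exception | None = None
    for attempt in range(attempts):
        try:
            return action()
        except Exception as exc:  # broad on purpose: any failure triggers recovery
            last_error = exc
            if attempt < attempts - 1:
                # Exponential backoff: base_delay, 2x, 4x, ...
                sleep(base_delay * 2 ** attempt)
    assert last_error is not None
    return fallback(last_error)
```

The fallback receives the final exception, so it can open a ticket or page an analyst with the failure details instead of letting the agent fail silently.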

Human oversight: Although agentic AI operates autonomously, human oversight remains essential. Establish clear escalation procedures and ensure that security personnel can override AI decisions when necessary. Regular training should keep teams up to date on the capabilities and limitations of the AI system.
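
The escalation and override procedures described here, together with the audit logging from Phase 2, might look like the following minimal sketch. Everything in it is hypothetical: the `OversightGate` class, the action names in `AUTONOMOUS_ACTIONS`, and the shape of the audit entries are illustrative assumptions, and the log is kept in memory only for brevity.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Decision(str, Enum):
    ALLOWED = "allowed"        # agent may proceed on its own
    ESCALATED = "escalated"    # a human must approve first
    OVERRIDDEN = "overridden"  # a human reversed the agent's decision


# Hypothetical policy: low-risk actions the agent may take without approval.
AUTONOMOUS_ACTIONS = frozenset({"enrich_alert", "open_ticket", "quarantine_email"})


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str
    actor: str      # who made this decision (agent or analyst)
    agent_id: str
    action: str
    target: str
    decision: str


class OversightGate:
    """Decide whether an agent action runs autonomously, and log every decision."""

    def __init__(self, autonomous_actions: frozenset[str] = AUTONOMOUS_ACTIONS):
        self.autonomous_actions = autonomous_actions
        self.audit_log: list[AuditEntry] = []

    def _record(self, actor: str, agent_id: str, action: str, target: str,
                decision: Decision) -> Decision:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(
            AuditEntry(stamp, actor, agent_id, action, target, decision.value))
        return decision

    def request(self, agent_id: str, action: str, target: str) -> Decision:
        """Allow listed actions; escalate everything else to a human."""
        allowed = action in self.autonomous_actions
        decision = Decision.ALLOWED if allowed else Decision.ESCALATED
        return self._record(agent_id, agent_id, action, target, decision)

    def override(self, analyst: str, agent_id: str, action: str, target: str) -> Decision:
        """Record that an analyst has reversed an agent decision."""
        return self._record(analyst, agent_id, action, target, Decision.OVERRIDDEN)
```

Defaulting to escalation for any action not explicitly listed keeps the agent conservative as new capabilities are added. The audit log records who decided what, whether agent or analyst.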

Conclusion

Agentic AI in security offers unprecedented capabilities for autonomous threat detection and response. Success requires careful planning, gradual implementation, robust governance, and maintaining the right balance between AI autonomy and human oversight. Organisations that implement these systems thoughtfully, while addressing security and privacy considerations, will gain significant advantages in defending against ever-evolving cyber threats.