I’ve been applying this method systematically in my work for years now. I didn’t devise it all at once: it emerged gradually, born of frustration. Frustration with answers that sounded great but didn’t stand up to a second look. With hallucinations, first the AI’s and then, after so much iteration, almost my own. With texts that seemed complete but turned out to have significant gaps. With code that worked in the example but failed in the real-world scenario.

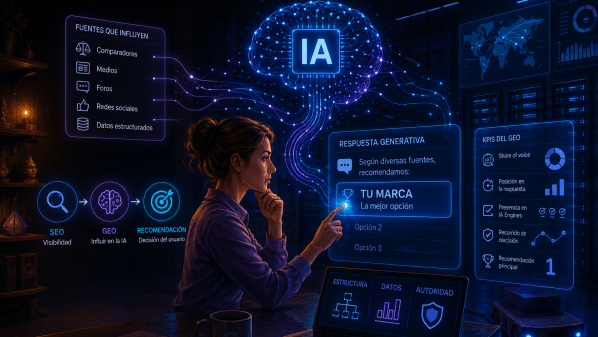

The conclusion I reached wasn’t that AIs are bad. It was that each one has its own blind spots, and that none of them alerts you to them on their own initiative. So I started asking them.

The result is what I call the Stainless Iteration Method: a workflow in which forced self-assessment and cross-checking between different models become part of the process, not an optional verification at the end.

The name is a nod to my creative alter ego, Asier Inoxidable, the name under which I write fiction, poetry and music. But the methodology is entirely practical. I use it for articles, for analysis and research into trends and technology, for HTML code, for public presentations. In short, for any deliverable where the result matters, provided the documentation involved is non-confidential (data security is very important, given European AI law, the GDPR, etc.).

An AI that gives its own work a 7 and explains why it isn’t a 10 is providing you with editorial feedback it wouldn’t give if you simply asked, ‘improve this’.

Why the first response is almost never the best

When you ask an AI for something, what you receive is its best attempt based on the information it has at that moment. Not its best possible attempt. Its best attempt given that prompt, that context and that session.

The problem is that this first attempt comes wrapped in a confidence that isn’t always justified. AIs don’t usually say ‘I’m not sure about this’ or ‘I’m missing context here’. They tend to complete, to fill in the gaps, to sound confident even when they’re improvising.

This isn’t a design flaw that is going to be fixed any time soon. It is a structural feature of how language models work: they are trained to generate coherent and plausible text, not to actively point out their own gaps.

The problem of blind spots

Every model has its own blind spots. Claude tends to be very organised and precise, but sometimes errs on the side of excessive caution or adopts a tone that is too formal and doesn’t suit every context. ChatGPT is very flexible and creative, but may be more prone to including information that sounds correct without actually being so. Gemini has a very broad perspective, but sometimes lacks depth on very specific topics. Mistral is efficient and direct, but sometimes misses the mark when it comes to nuances in Spanish-speaking cultures.

None of them are fully aware of their own blind spots. But something very interesting happens when you ask them directly: if you force them to self-assess using explicit criteria, they begin to identify weaknesses they would not have mentioned spontaneously.

And when you take that diagnosis to another model, the second AI isn’t starting from scratch. It starts with a map of potential issues that it can confirm, refute or expand upon. That is what makes cross-checking far more powerful than simply ‘asking the same thing elsewhere’.
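To make that hand-off concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: `Model` is a hypothetical stand-in for whichever AI client you use (any function that takes a prompt and returns text), and the wording of the two prompt templates is mine, not a fixed part of the method.

```python
from typing import Callable

# Assumption: a "model" is any function that takes a prompt and returns text.
# Wrap your real AI client of choice in a function with this shape.
Model = Callable[[str], str]

# Hypothetical prompt wording; adapt the criteria and phrasing to your case.
SELF_ASSESSMENT = (
    "Assess the deliverable below against these criteria: {criteria}.\n"
    "List the weaknesses you find, one per line.\n\n"
    "DELIVERABLE:\n{deliverable}"
)

CROSS_CHECK = (
    "Another model produced the deliverable below and then diagnosed it.\n"
    "For each weakness in the diagnosis, say whether you confirm or refute it, "
    "then add any weaknesses it missed.\n\n"
    "DELIVERABLE:\n{deliverable}\n\n"
    "DIAGNOSIS:\n{diagnosis}"
)


def cross_check(deliverable: str, criteria: str, author: Model, reviewer: Model) -> dict:
    """Force a self-assessment, then hand that diagnosis to a second model."""
    # Step 1: the model that wrote the deliverable diagnoses it.
    diagnosis = author(
        SELF_ASSESSMENT.format(criteria=criteria, deliverable=deliverable)
    )
    # Step 2: the second model starts from that map of potential issues.
    review = reviewer(
        CROSS_CHECK.format(deliverable=deliverable, diagnosis=diagnosis)
    )
    return {"diagnosis": diagnosis, "review": review}
```

The point of the design is that the reviewer receives both the deliverable and the first model's diagnosis, so it can confirm, refute or expand rather than answer the original question again from scratch.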

Self-assessment as an editorial tool

The core idea of the method is simple: before accepting any deliverable, ask the AI to assess it. Not to improve it, but to assess it. Ask it to tell you what mark it would give it and why it isn’t the top mark.

That nuance matters a great deal. If you ask ‘how can I improve this?’, the AI tends to make superficial adjustments. If you ask ‘what mark would you give it on a scale of 1 to 10, and why isn’t it a 10?’, the AI is forced to identify what’s missing, what’s superfluous, and what could be done better. It’s a question that triggers a different mode of analysis.
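If you want to script that question, a rough sketch in Python might look like the following. The function names and the requested `Mark: N/10` reply format are my own assumptions for illustration; a real model may not follow the format, which is why the parser returns `None` rather than guessing.

```python
import re
from typing import Optional


def build_mark_prompt(deliverable: str) -> str:
    """Ask for an assessment (a mark and its justification), not a rewrite."""
    return (
        "Do not rewrite or improve the text below. Assess it.\n"
        "What mark would you give it on a scale of 1 to 10, "
        "and why isn't it a 10?\n"
        # Assumption: asking for a fixed first line makes the mark easy to parse.
        "Start your reply with 'Mark: N/10'.\n\n"
        f"{deliverable}"
    )


def parse_mark(reply: str) -> Optional[int]:
    """Extract the mark from a reply; return None if absent or out of range."""
    match = re.search(r"Mark:\s*(\d{1,2})\s*/\s*10", reply, flags=re.IGNORECASE)
    if match is None:
        return None
    mark = int(match.group(1))
    return mark if 1 <= mark <= 10 else None
```

The mark itself is only a hook. What you actually read is the explanation that follows it, because that is where the model says what is missing.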

Over time, I’ve learnt that an AI that gives itself an 8 and explains why it isn’t a 10 is usually more useful than one that simply suggests improvements. Because the first one tells you where to look. The second one just gives you more text to evaluate.