- In the 1940s, Isaac Asimov formulated the three laws of robotics.

- Since then, as this branch of technology has evolved, other authors have added new principles.

- Could the eruption of a volcano in Indonesia in the early 19th century have influenced Asimov’s formulation of the laws of robotics more than a hundred years later?

Asimov’s Three Laws of Robotics

The Soviet-born American biochemist Isaac Asimov (born 1919 or 1920, died 1992) is one of the most influential figures in the history of robotics. He also stood out in the literary world for his many published works of science fiction, popular science and even history.

In one of his stories, Runaround (1942), he set out the three laws of robotics, often regarded as an ethical and moral basis for developing autonomous systems:

- A robot shall not harm a human being, nor by inaction allow a human being to be harmed.

- A robot shall comply with orders given by human beings, except for those that conflict with the first law.

- A robot shall protect its own existence to the extent that this protection does not conflict with the first or the second law.
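Read together, the three laws form a strict priority order: the first outranks the second, and the second outranks the third. As a rough illustration only (not something Asimov wrote, and not how real robots are controlled), that ordering can be sketched in Python as a lexicographic comparison. The `Action` class, its boolean fields and `choose_action` are hypothetical names invented for this sketch, and it assumes the consequences of each action are already known, which is precisely the hard part in practice.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A candidate action with hypothetical, pre-computed consequences."""
    name: str
    harms_human: bool      # First Law: harms a human or lets one come to harm
    disobeys_order: bool   # Second Law: goes against an order given by a human
    endangers_robot: bool  # Third Law: puts the robot's own existence at risk


def law_violations(action: Action) -> tuple[bool, bool, bool]:
    """Return the violations ordered by priority: First, Second, Third Law."""
    return (action.harms_human, action.disobeys_order, action.endangers_robot)


def choose_action(candidates: list[Action]) -> Action:
    """Pick the action whose violations are smallest in lexicographic order.

    Tuples compare element by element and False < True, so a First Law
    violation outweighs any combination of lower-priority violations.
    """
    return min(candidates, key=law_violations)


candidates = [
    Action("obey the order to stand still",
           harms_human=True, disobeys_order=False, endangers_robot=False),
    Action("disobey and rescue the human",
           harms_human=False, disobeys_order=True, endangers_robot=True),
]
chosen = choose_action(candidates)
print(chosen.name)  # disobey and rescue the human
```

In this toy case the robot disobeys an order and risks itself, because both are preferable to letting a human come to harm.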

Law Zero: Isaac Asimov’s Fourth Law of Robotics

Four decades later, in his 1985 novel Robots and Empire, Asimov himself added a fourth law of robotics. It is known as Law Zero (the Zeroth Law) because it takes precedence over the other three.

In it, Asimov stated the following:

- A robot may not harm humanity or, by inaction, allow humanity to come to harm.

Frankenstein, at the origin of Asimov’s laws

But why did these laws arise?

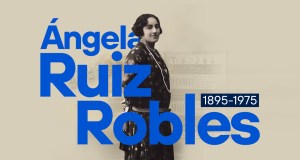

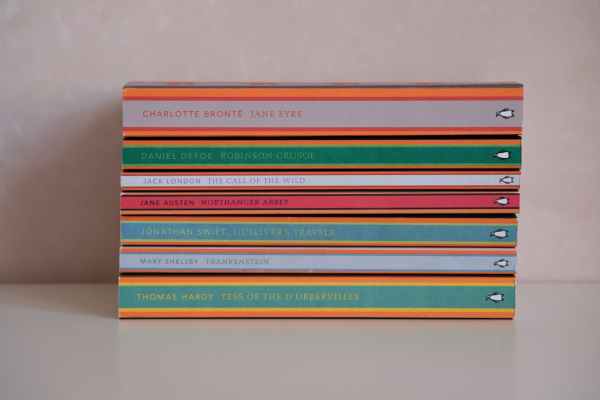

The New York-based writer coined the term “Frankenstein complex” to describe the human fear that beings of our own creation might rise up against their creators, as happens in the novel Frankenstein; or, The Modern Prometheus by the British writer Mary Shelley.

As a curiosity, the seed of this novel lies in a gathering that Mary Shelley and her future husband, Percy Shelley, had with Lord Byron (Ada Lovelace’s father) and his physician, the writer John Polidori, near Geneva in 1816. That was the so-called Year Without a Summer, a consequence of the eruption of an Indonesian volcano the year before.

With the bad weather keeping the group indoors, Byron challenged each of them to write a horror story. That challenge was the origin of Frankenstein for Shelley. It also produced Polidori’s The Vampyre, the starting point of the romantic vampire as a literary subgenre.

So who knows… without the eruption of Tambora, perhaps Frankenstein would not have been written, and the fear of a creation rebelling against its creator would not have led Asimov to formulate the laws of robotics.

Post-Asimov laws of robotics

Responsible robotics

Returning to the laws of robotics themselves: they were formulated from a theoretical perspective several decades ago, which raises the question of how they can be applied in practice and how they should be updated in light of advances in robotics and artificial intelligence, two fields that are related but not the same.

One updated proposal, more focused on practice than on Asimov’s theoretical and literary plane, comes from Robin Murphy and David D. Woods in their 2009 article Beyond Asimov: The Three Laws of Responsible Robotics.

These three laws of responsible robotics are:

- A human should not deploy a robot without the human-robot working system meeting the highest legal and professional standards regarding ethics and safety.

- A robot must respond to humans in a manner appropriate to the human’s role.

- A robot must be endowed with sufficient situated autonomy to protect its own existence, as long as such protection provides for a smooth transfer of control to other agents in a manner consistent with the first and second laws.

Ethical Principles for Robot Designers, Builders and Users

Back in 2011, the Engineering and Physical Sciences Research Council (EPSRC) and the Arts and Humanities Research Council (AHRC) in the UK published their five ethical principles for robot designers, builders and users:

- Robots should not be designed solely or primarily to kill or harm humans.

- Humans, not robots, are the responsible agents. Robots are tools designed to achieve human goals.

- Robots are products; they should be designed to be safe and secure.

- Robots are artifacts; they should not be designed to exploit vulnerable users by evoking an emotional response or dependency. It must always be possible to distinguish a robot from a human being.

- It must always be possible to find out who is legally responsible for a robot.

Satya Nadella

In 2016, Microsoft CEO Satya Nadella proposed six rules for artificial intelligence:

- It must help humanity and respect its autonomy.

- It must be transparent.

- It must maximize efficiency without destroying people’s dignity.

- It must be designed to preserve privacy in an intelligent way.

- It must have algorithmic accountability.

- It must be protected against bias.

Other principles to be taken into account in the rules for artificial intelligence are:

- It must also be sustainable, taking into account the long-term environmental and social impact.

- It must be secure and resistant to attacks and vulnerabilities.

- It must be fair, avoiding discrimination and promoting equity in society.

Conclusion

Since Asimov first formulated them in a literary setting in 1942, the evolution of technology in general, and of robotics and AI in particular, has meant that the laws of robotics (and even of artificial intelligence) have had to adapt to the new realities that have arisen over time.