AI agents are transforming the way we interact with systems, data and processes, and to do their work we hand them a great deal of sensitive information: email passwords, social media credentials, and access to databases and file systems. This new layer of automation introduces a risk that is not always addressed in sufficient depth: the exposure of data in use.

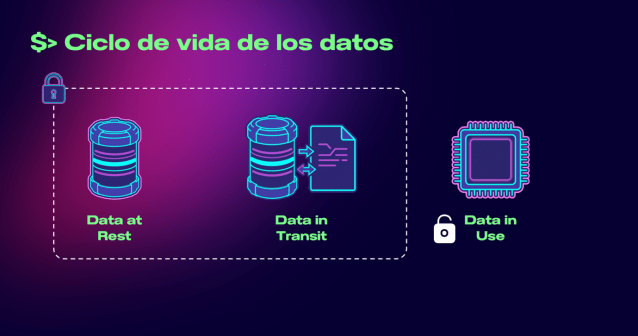

Unlike data at rest (protected in databases and storage) and data in transit (encrypted in communications), data in use, meaning data being processed in memory, are usually unencrypted while they are being processed.

In the case of agents, we’re giving them access to our email, our calendar, our social media, our company databases and our file systems. All of this access relies on credentials, such as passwords and tokens, that sit unencrypted in memory while the agent is using them. If the host is compromised, an attacker can read that unencrypted data.

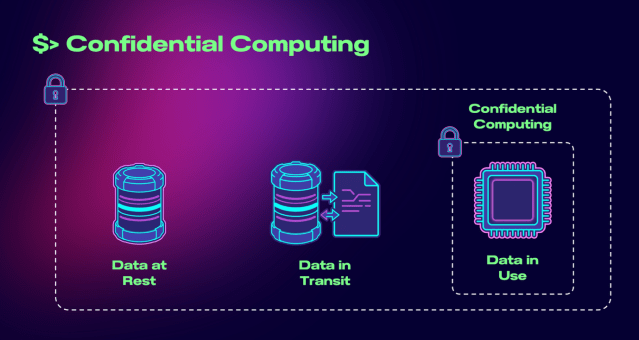

This is where the Confidential Computing paradigm, one of the key cybersecurity trends for the coming years, comes into play.

This approach can:

- Keep data protected even while they are being processed.

- Isolate workloads in secure environments within the processor (Trusted Execution Environments, or TEEs).

- Reduce the attack surface, even against insider threats and compromised hosts.

Technologies such as Intel SGX, AMD SEV and ARM TrustZone enable these protected environments, in which data are decrypted only inside the protected environment (an enclave, an encrypted virtual machine or a secure world) and are never exposed in plaintext to the host operating system, the cloud provider or potential attackers.

Furthermore, mechanisms such as remote attestation can verify that the environment is trustworthy before any sensitive information is processed, adding an extra layer of assurance in critical scenarios such as payments, digital identity and personal data processing.
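To make the attestation step more concrete, here is a deliberately simplified sketch of the idea. It is not the SGX, SEV or TrustZone protocol: a hypothetical verifier releases a secret (for example, an agent’s email token) only if the environment reports the expected code measurement, answers a fresh challenge, and the report’s authenticity checks out. Real attestation relies on hardware-rooted asymmetric signatures and vendor certificate chains; here an HMAC with a shared key stands in for that signature, and the names `Report`, `sign_report`, `verify_report` and `release_secret` are hypothetical.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for the hardware-rooted signing key. Real TEEs sign
# reports with asymmetric keys certified by the CPU vendor, not a shared secret.
ATTESTATION_KEY = b"demo-shared-key"

# Hash of the code we expect to be running in the TEE (the "measurement").
EXPECTED_MEASUREMENT = hashlib.sha256(b"trusted-agent-enclave-v1").hexdigest()


@dataclass
class Report:
    measurement: str  # hash of the code loaded into the TEE
    nonce: str        # verifier's challenge, prevents replay of old reports
    signature: str    # authenticity tag over measurement and nonce


def sign_report(measurement: str, nonce: str) -> Report:
    """Simulates the TEE producing a signed attestation report."""
    msg = f"{measurement}|{nonce}".encode()
    tag = hmac.new(ATTESTATION_KEY, msg, hashlib.sha256).hexdigest()
    return Report(measurement, nonce, tag)


def verify_report(report: Report, expected_nonce: str) -> bool:
    """Checks authenticity, freshness and the code measurement."""
    msg = f"{report.measurement}|{report.nonce}".encode()
    expected_tag = hmac.new(ATTESTATION_KEY, msg, hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(report.signature, expected_tag)
        and report.nonce == expected_nonce
        and hmac.compare_digest(report.measurement, EXPECTED_MEASUREMENT)
    )


def release_secret(report: Report, expected_nonce: str, secret: str) -> Optional[str]:
    """Hands the credential over only if attestation succeeds."""
    return secret if verify_report(report, expected_nonce) else None


if __name__ == "__main__":
    nonce = secrets.token_hex(16)
    trusted = sign_report(EXPECTED_MEASUREMENT, nonce)
    modified = sign_report(hashlib.sha256(b"modified-code").hexdigest(), nonce)
    print(release_secret(trusted, nonce, "email-token"))   # email-token
    print(release_secret(modified, nonce, "email-token"))  # None
```

The nonce is what stops a compromised host from replaying an old but valid report, and the measurement check is what ties the secret to a specific, known piece of code rather than to whatever happens to be running.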

This was one of the subjects that Telefónica Innovación Digital recently addressed at RootedCON, one of the leading European cybersecurity forums. There, Pablo González, head of the Future Cybersecurity Lab team, and I shared our vision of the emerging risks associated with agents and the need to upgrade data protection models.

As AI agents gain ever greater capabilities and access, the design of secure architectures can no longer be limited to protecting storage and communications.

At Telefónica Innovación Digital we’re working at the intersection of AI and cybersecurity to anticipate these challenges and develop solutions that allow these technologies to be adopted securely and at scale.

The future of computing lies in protecting data at every stage, and that necessarily includes while they are in use.